Project Description

24-October-2023

The Gesture Control for YouTube application combines hand tracking, image processing, and machine learning to provide hands-free control of YouTube videos through hand gestures. It works as follows:

- Hand Tracking: The application uses the OpenCV library and the cvzone.HandTrackingModule to detect and track the user's hand in real time. It analyzes the video feed from a webcam and identifies the hand's position, movements, and other relevant features.

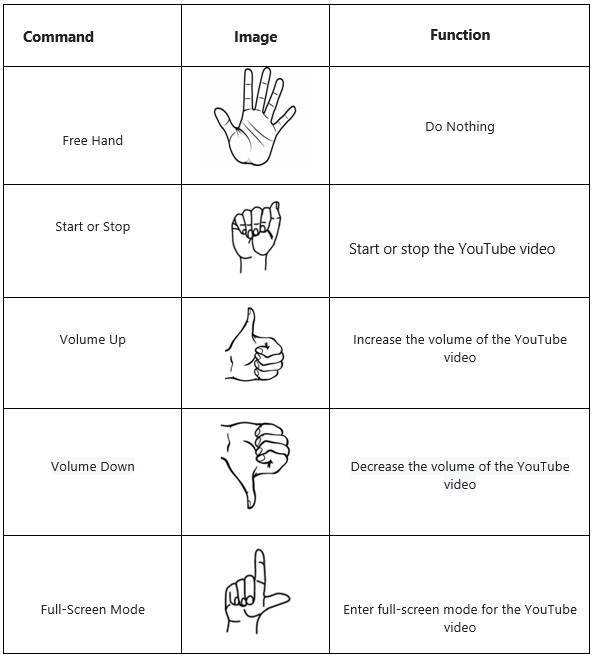

- Gesture Recognition: A trained machine learning model, implemented using the cvzone.ClassificationModule, is employed to recognize different hand gestures. The model has been trained on labeled data, enabling it to classify gestures such as "free hand," "right," "left," "v_up" (volume up), "v_down" (volume down), "max" (fullscreen), "min" (exit fullscreen), and "stop" (pause/play).

- YouTube Playback Control: Once the application recognizes a specific hand gesture, it triggers the corresponding action for YouTube playback control. For example, if the "v_up" gesture is detected, it increases the volume; if "max" is detected, it enters fullscreen mode; if "stop" is detected, it pauses or resumes the video, and so on. These actions are simulated using the pyautogui library, which emulates keyboard inputs.
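The three stages described above can be sketched roughly as follows. This is a minimal illustration, not the project's actual source: the label order in `LABELS`, the key chosen for each gesture (based on YouTube's standard keyboard shortcuts), the meaning of "right" and "left" as seek forward and seek backward, the one-second cooldown, the 20-pixel crop padding, and the model file paths are all assumptions. `GestureDispatcher` and `run` are hypothetical names introduced here.

```python
import time

# Assumed label order; it must match the class order used when the model was trained.
LABELS = ["free hand", "right", "left", "v_up", "v_down", "max", "min", "stop"]

# Assumed mapping from gesture label to a YouTube keyboard shortcut.
# "free hand" is deliberately absent: it triggers no action.
KEY_FOR_GESTURE = {
    "right": "right",  # assumed meaning: seek forward
    "left": "left",    # assumed meaning: seek backward
    "v_up": "up",      # volume up (player must have focus)
    "v_down": "down",  # volume down
    "max": "f",        # "f" toggles fullscreen
    "min": "esc",      # Esc exits fullscreen
    "stop": "k",       # "k" toggles pause/play
}


class GestureDispatcher:
    """Turns per-frame gesture labels into rate-limited key presses."""

    def __init__(self, press, cooldown=1.0, clock=time.monotonic):
        self.press = press        # callable that emits a key, e.g. pyautogui.press
        self.cooldown = cooldown  # minimum seconds between two key presses
        self.clock = clock
        self._last_fired = None

    def handle(self, label):
        """Press the key mapped to `label`; return the key, or None if nothing fired."""
        key = KEY_FOR_GESTURE.get(label)
        if key is None:
            return None
        now = self.clock()
        if self._last_fired is not None and now - self._last_fired < self.cooldown:
            return None  # still cooling down, ignore the repeated detection
        self._last_fired = now
        self.press(key)
        return key


def run(model_path="Model/keras_model.h5", labels_path="Model/labels.txt"):
    """Webcam loop. Both default paths are hypothetical placeholders."""
    import cv2
    import pyautogui
    from cvzone.ClassificationModule import Classifier
    from cvzone.HandTrackingModule import HandDetector

    detector = HandDetector(maxHands=1)
    classifier = Classifier(model_path, labels_path)
    dispatcher = GestureDispatcher(pyautogui.press)
    cap = cv2.VideoCapture(0)
    try:
        while True:
            ok, frame = cap.read()
            if not ok:
                break
            # Detect on a copy so the drawn landmarks do not end up in the crop.
            hands, _ = detector.findHands(frame.copy())
            if hands:
                x, y, w, h = hands[0]["bbox"]
                crop = frame[max(0, y - 20):y + h + 20, max(0, x - 20):x + w + 20]
                if crop.size:
                    _, index = classifier.getPrediction(crop, draw=False)
                    dispatcher.handle(LABELS[index])
            cv2.imshow("Gesture Control", frame)
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        cap.release()
        cv2.destroyAllWindows()
```

Calling `run()` with a trained model and a focused YouTube tab would start the loop; pressing `q` in the preview window stops it. The cooldown is there because a classifier reports a label on every frame, so without it a single held gesture would send the same key dozens of times per second. Passing the key-press function in as a parameter also lets the mapping logic be exercised without a webcam or display.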

Other Details

Software Requirements: Python

Hardware Requirements: Webcam, 4 GB RAM, 2.0 GHz processor or above

Project Attachments

| Attachment | Description |
| --- | --- |
| Doc | Document File |
| Source Code | Complete source code of project |